Have you ever programmed raw machine code (not for class)? Examined a hex dump with just a hex editor (or, heck, without)? Written your own software floating-point library? Division library? Written a non-school-assignment in Lisp or Forth?

What sort of "lost arts" have been forgotten? And what reason (if any) would there be to resurrect them?


Programming for space efficiency seems like a lost art. Everyone nowadays assumes memory is cheap. It is, unless you're working with sufficiently large datasets. I'm currently working on a project involving a roughly 54,000 x 32,000 matrix. Needless to say, some creativity was involved in making everything fit in memory.
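The answer doesn't say what the matrix holds. Assuming, purely for illustration, that each cell is a yes/no value, one classic space-saving trick is to pack 64 cells into each 64-bit word: 54,000 x 32,000 = 1,728,000,000 cells take about 13.8 GB as 8-byte doubles but only 216 MB as bits. A minimal sketch (the BitMatrix type and bm_* functions are hypothetical names, not from the original project):

```c
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical dense 0/1 matrix, packed 64 cells per uint64_t word. */
typedef struct {
    size_t rows, cols;
    uint64_t *bits;
} BitMatrix;

/* Number of 64-bit words needed for rows * cols bits, rounded up. */
static size_t bm_words(size_t rows, size_t cols) {
    return (rows * cols + 63) / 64;
}

BitMatrix *bm_create(size_t rows, size_t cols) {
    BitMatrix *m = malloc(sizeof *m);
    if (m == NULL) return NULL;
    m->rows = rows;
    m->cols = cols;
    m->bits = calloc(bm_words(rows, cols), sizeof *m->bits);  /* all cells start at 0 */
    if (m->bits == NULL) { free(m); return NULL; }
    return m;
}

void bm_set(BitMatrix *m, size_t r, size_t c, int value) {
    size_t i = r * m->cols + c;                 /* row-major cell index */
    uint64_t mask = (uint64_t)1 << (i % 64);    /* bit within its word */
    if (value) m->bits[i / 64] |= mask;
    else       m->bits[i / 64] &= ~mask;
}

int bm_get(const BitMatrix *m, size_t r, size_t c) {
    size_t i = r * m->cols + c;
    return (int)((m->bits[i / 64] >> (i % 64)) & 1u);
}

void bm_free(BitMatrix *m) {
    if (m != NULL) { free(m->bits); free(m); }
}
```

If the cells are not boolean, the same idea extends to packing small integers into a few bits each; the trade-off is a shift and mask on every access in exchange for a large cut in memory.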

Punching holes in floppy disks to make them double-sided.

Tweaking your CONFIG.SYS and AUTOEXEC.BAT files to squeeze every free byte you possibly could out of your 640 KB, so a game would run.

Very similar to that scene in Apollo 13 where they are trying to cut as much as possible out of the normal startup process due to low power!

Performance as a feature, not as an afterthought.

Reverse engineering through disassembly... would get back to me though. SoftICE, anyone? ;-)

Why would it be useful? It gives one a rather good understanding of the inner workings of a machine, and makes languages like C and C++ easier to understand.

The ability to work without constant access to online reference material (and Google and Stack Overflow).

The Story of Mel [1] is a classic of the lost arts... in blank verse, yet.

[1] http://www.cs.utah.edu/~elb/folklore/mel.html

Mel loved the RPC-4000

because he could optimize his code:

that is, locate instructions on the drum

so that just as one finished its job,

the next would be just arriving at the "read head"

and available for immediate execution.

There was a program to do that job,

an "optimizing assembler",

but Mel refused to use it.
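The placement rule in the verse can be sketched with a deliberately simplified, hypothetical drum model (the word counts below are made up, not RPC-4000 specifications): if reading an instruction takes one word-time and executing it takes a few more, the next instruction should sit exactly that many words further around the drum.

```c
#include <stddef.h>

/* Hypothetical drum model: words_per_track words around the circumference,
   one word passing under the read head per word-time. */

/* Where the next instruction should be stored so it arrives at the read head
   just as the current one (at addr) finishes: one word-time to read it,
   then exec_word_times while it executes. */
size_t optimal_next_address(size_t addr, size_t exec_word_times, size_t words_per_track) {
    return (addr + 1 + exec_word_times) % words_per_track;
}

/* Word-times spent waiting for `target` to come around when the CPU becomes
   ready at drum position `ready` (ready must be < words_per_track). */
size_t rotational_wait(size_t ready, size_t target, size_t words_per_track) {
    return (target + words_per_track - ready) % words_per_track;
}
```

With these made-up numbers (64 words per track, a 3-word-time instruction at address 0), the optimal next address is 4, while the naive sequential address 1 costs rotational_wait(4, 1, 64), or 61 word-times: nearly a full revolution per instruction.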

Debugging a program via blinking lights.

(Or blinkenlights [1], if you prefer)

[1] http://www.catb.org/~esr/jargon/html/B/blinkenlights.html

Writing self-modifying code!

Interrupt(int x) function? Intel was nice enough to provide only int imm8 for the interrupt instruction, so if you needed a dynamic interrupt number, self-modifying code was pretty much forced upon you. - Earlz
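To illustrate the patch Earlz describes: x86 encodes INT imm8 as the opcode byte 0xCD followed by the vector number, so a routine could overwrite that second byte just before executing the instruction. This sketch only patches a byte buffer and never executes it (modern operating systems normally map code pages read-only); the stub layout and function name are hypothetical.

```c
#include <stdint.h>

#define OP_INT_IMM8 0xCD   /* x86: INT imm8 is encoded as CD ib */
#define OP_RET_NEAR 0xC3   /* x86: near RET */

/* Hypothetical stub "INT 00h; RET": byte 1 is the vector to patch. */
static uint8_t int_stub[] = { OP_INT_IMM8, 0x00, OP_RET_NEAR };

/* Write `vector` into the immediate byte of an INT imm8 instruction.
   Returns 0 (and changes nothing) if `code` does not start with INT imm8. */
int patch_int_vector(uint8_t *code, uint8_t vector) {
    if (code[0] != OP_INT_IMM8) return 0;
    code[1] = vector;
    return 1;
}
```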

My first assembler was pirated from an Intel 8080 development system. I printed the hex dump on an ASR33 teletype and entered it by hand into my SOL80. I hand edited the I/O functions to use the SOL's cassette drive.

The SOL80 was built from a bag of 74LSxx parts and an 8080.

I relocated the SOLUS monitor from $C800 to $F800 so I'd have more room to run a (modified) UCSD Pascal system.

I don't think that hex editors existed back then. At least I didn't have one.

I wrote my first C compiler to run in 48K of memory: 5 passes: pre-processor, parser, improver (not really), code generator, and assembler. For the 6809. Yes, floating point in software and divide as well.
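Software divide routines on CPUs without a hardware divide instruction typically used restoring (shift-and-subtract) division, producing one quotient bit per iteration. A minimal 16-bit unsigned sketch in C; the routines in a compiler like this would have been hand-written assembly, and udiv16 is a hypothetical name:

```c
#include <stdint.h>

/* Restoring division of 16-bit unsigned integers.
   Returns 1 on success, 0 on division by zero (outputs untouched). */
int udiv16(uint16_t dividend, uint16_t divisor, uint16_t *quotient, uint16_t *remainder) {
    if (divisor == 0) return 0;
    uint32_t rem = 0;   /* wide enough for the shifted partial remainder */
    uint16_t quo = 0;
    for (int bit = 15; bit >= 0; bit--) {
        /* Bring down the next dividend bit into the partial remainder. */
        rem = (rem << 1) | ((dividend >> bit) & 1u);
        quo <<= 1;
        /* If the divisor fits, subtract it and record a 1 quotient bit. */
        if (rem >= divisor) {
            rem -= divisor;
            quo |= 1;
        }
    }
    *quotient = quo;
    *remainder = (uint16_t)rem;
    return 1;
}
```

For example, udiv16(1000, 7, &q, &r) yields q == 142 and r == 6 after 16 iterations, one per dividend bit.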

I ran (almost) Version 7 Unix on a PDP-11/23 (Venix), with a compiler written by Ritchie.

I ran BSD Unix on a VAX. With a 9-track tape drive.

I had a SparcI, a Lisa, the original Mac.

That was in the first 5 years. A lot happened since then.

You guys (at least some of you) have missed a lot.

I still have a warm and fuzzy feeling for PEEKs and POKEs.

Reading code—somebody else's code.

This is one of the best ways to learn, and you learn along many different axes from a single piece of code: you might be beset by bad 'style' but still learn a great algorithm, fight with spaghetti code just to find the secret sauce of the data structure, or ignore the code to see the patterns.

Parking hard disk heads :)

Landing zones and load/unload technology [1]

Do you remember the DOS park utility?

[1] http://en.wikipedia.org/wiki/Hard_disk_drive#Landing_zones_and_load.2Funload_technology

Using DOS extender drivers (such as EMX/XMS) to access memory beyond 640 KB.

In the early days we did really weird things:

"Think before you act"

Not so long ago, processing time was expensive and you had to make sure that your program would run on the first try. You were asked to create basic paper documentation, get it reviewed, code your program and, finally, run it.

Now, everybody has a hammer.

Charging money for your code!

Designing ANSI/ASCII screens for BBSes!!!
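Those BBS screens were built from ANSI escape sequences: ESC [ followed by semicolon-separated Select Graphic Rendition codes and a final m, where 30-37 set the foreground color, 40-47 the background, 1 bold/bright and 0 reset. A small sketch that builds and prints such sequences (ansi_color and ansi_print are hypothetical helpers):

```c
#include <stdio.h>

/* Color numbers 0-7: black, red, green, yellow, blue, magenta, cyan, white.
   Writes ESC[<bright>;<30+fg>;<40+bg>m into `out`; returns snprintf's length. */
int ansi_color(char *out, size_t n, int bright, int fg, int bg) {
    return snprintf(out, n, "\x1b[%d;%d;%dm", bright ? 1 : 0, 30 + fg, 40 + bg);
}

/* Print one line of colored text, then reset attributes with ESC[0m. */
void ansi_print(FILE *f, int bright, int fg, int bg, const char *text) {
    char seq[16];
    ansi_color(seq, sizeof seq, bright, fg, bg);
    fprintf(f, "%s%s\x1b[0m\n", seq, text);
}
```

For example, ansi_color(buf, sizeof buf, 1, 3, 4) produces ESC[1;33;44m, bright yellow on blue; a whole BBS screen was essentially a long run of such sequences interleaved with CP437 text.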

JavaScript seems like a lost art these days. I couldn't count the number of questions on Stack Overflow that read something like this:

How do you add two numbers using jQuery?

After five years of Eclipse, my vi-fu is seriously degrading. Combine that with a terminal without cursor keys, and I am in a bit of trouble.

Classic BASIC (before QuickBasic), with line numbers, GOTOs and GOSUBs, but without any loops other than FOR loops (you used IF and GOTO to implement WHILE loops). It allowed for the best spaghetti in town.
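For readers who never used line-numbered BASIC, here is roughly what a WHILE loop built from IF and GOTO looked like, transcribed into C's goto with the equivalent BASIC lines in comments (a toy example that sums 1..N; the line numbers are illustrative):

```c
/* Sum 1..n using only IF and GOTO, as a WHILE loop had to be written
   in classic line-numbered BASIC. */
int sum_to(int n) {
    int total = 0;                 /* 80  T = 0 */
    int i = 1;                     /* 90  I = 1 */
line100:                           /* 100 IF I > N THEN 140 */
    if (i > n) goto line140;
    total += i;                    /* 110 T = T + I */
    i++;                           /* 120 I = I + 1 */
    goto line100;                  /* 130 GOTO 100 */
line140:                           /* 140 RETURN */
    return total;
}
```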

Writing code by wiring individual diodes because (a) you happen to have them lying around and (b) your parents don't actually give you enough allowance to let you buy an EPROM burner, and you're not quite yet skilled enough to build one yourself. Unfortunately the resulting programs are rather short. But the output can be read on the homemade logic probe!

Ah, misspent youth.

Classic viruses that embed themselves in other executables. I haven't heard of any modern malware that's not related to controlling botnets and sending spam, and certainly no malware comes close in complexity to ZMist [1].

P. S.

No, this should not really be resurrected. Only for academic purposes.

[1] http://en.wikipedia.org/wiki/ZMist

Fixing the partition table and FAT file system by hand using a simple hex editor.
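Fixing a partition table by hand meant knowing the classic MBR layout: four 16-byte entries starting at offset 446 (0x1BE) of the first sector, followed by the 0x55 0xAA signature at offsets 510-511, with the starting LBA and sector count stored as little-endian 32-bit values at entry offsets 8 and 12. A minimal parser sketch (the struct and function names are hypothetical):

```c
#include <stdint.h>

typedef struct {
    uint8_t  status;        /* 0x80 = active/bootable, 0x00 = inactive */
    uint8_t  type;          /* partition type byte, e.g. 0x06 = FAT16 */
    uint32_t lba_start;     /* first sector of the partition */
    uint32_t sector_count;  /* length in sectors */
} MbrEntry;

/* Assemble a 32-bit value stored least significant byte first. */
static uint32_t read_le32(const uint8_t *p) {
    return (uint32_t)p[0] | ((uint32_t)p[1] << 8) |
           ((uint32_t)p[2] << 16) | ((uint32_t)p[3] << 24);
}

/* Parse the four primary entries of a 512-byte MBR sector.
   Returns 0 if the 0x55AA boot signature is missing. */
int parse_mbr(const uint8_t sector[512], MbrEntry out[4]) {
    if (sector[510] != 0x55 || sector[511] != 0xAA) return 0;
    for (int i = 0; i < 4; i++) {
        const uint8_t *e = sector + 446 + 16 * i;  /* table starts at 0x1BE */
        out[i].status = e[0];
        out[i].type = e[4];                        /* bytes 1-3 and 5-7 are CHS start/end */
        out[i].lba_start = read_le32(e + 8);
        out[i].sector_count = read_le32(e + 12);
    }
    return 1;
}
```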

Writing a small Linux application in C from scratch to restore a damaged RAID set (3 of 4 disks had hardware errors when the server crashed).

+1 for threading reel-to-reel tapes. Been there, done that. (Tandberg tape drive for Norsk Data servers)

Opening COMMAND.COM in an editor and changing the error messages.

'Bad Command or Filename' to 'Deleting C:*.*. Please Wait'.

Two things:

To rephrase the last point: more often than not I find myself doing trial-and-error bugfixing rather than trying to understand why things are happening.

Just because computers are fast does not always mean it will be faster to try out all possible combinations. Even though I never lived through them, I wish I had the discipline of "the old days", when you wanted to make sure your program was correct because you might have only one single chance to compile and run it that day.

Programming to handle out-of-memory errors and allow the user to recover gracefully.

When I was programming the Mac, each call to malloc would be followed by a check to see whether an out-of-memory error had occurred, and if so, we handled the error in a way that would warn the user, allow them to save their work, and quit. Basically, we would keep a block of memory around for this purpose and free it up so it would be available for saving.

Of course, if the user ran out of memory a second time, he/she was SOL.
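The reserve-block technique described above, sometimes called a "rainy day fund", can be sketched in portable C. The 64 KB size, the messages, and the function names are assumptions for illustration, not the original Mac code:

```c
#include <stdio.h>
#include <stdlib.h>

#define RESERVE_BYTES (64 * 1024)   /* arbitrary size chosen for this sketch */

static void *reserve_block = NULL;

/* Grab the reserve at startup, while memory is still plentiful. */
int reserve_init(void) {
    reserve_block = malloc(RESERVE_BYTES);
    return reserve_block != NULL;
}

/* Free the reserve so there is room to save work.
   Returns 1 if there was a reserve to release, 0 if it was already used. */
int reserve_release(void) {
    if (reserve_block == NULL) return 0;
    free(reserve_block);
    reserve_block = NULL;
    return 1;
}

/* malloc wrapper: on failure, release the reserve and warn the user. */
void *checked_malloc(size_t n) {
    void *p = malloc(n);
    if (p == NULL) {
        if (reserve_release())
            fprintf(stderr, "Memory is low: please save your work and quit.\n");
        else
            fprintf(stderr, "Out of memory.\n");  /* the second time: SOL */
    }
    return p;
}
```

The second call to reserve_release() returns 0, which corresponds to the "second time" case above.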

Locking memory to prevent it from being moved while you were using it.

The Mac had these things called "Handles". Basically, they added an extra level of indirection to all memory access so that the system could compact memory by moving blocks around. This was possible because you only stored the "Handle" (a pointer to a pointer to memory), not the pointer itself.

However, you would need to lock memory down whenever accessing it, to prevent it from moving around, then release the lock afterwards.

It was a royal pain in the butt :)
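A toy model of relocatable blocks addressed through handles, loosely inspired by the Memory Manager's HLock/HUnlock; everything here (the 256-byte heap, the function names, the no-reuse slot policy) is a hypothetical simplification. Compaction slides unlocked blocks down and updates their master pointers, while locked blocks stay put so raw pointers into them remain valid:

```c
#include <stddef.h>
#include <string.h>

#define HEAP_SIZE  256
#define MAX_BLOCKS 8

typedef char **Handle;                 /* a pointer to a master pointer */

static char   heap[HEAP_SIZE];
static char  *master[MAX_BLOCKS];      /* master pointers; NULL = disposed */
static size_t block_size[MAX_BLOCKS];
static int    block_locked[MAX_BLOCKS];
static int    next_slot = 0;           /* slots never reused: slot order = address order */
static size_t heap_top = 0;

Handle new_handle(size_t n) {
    if (next_slot == MAX_BLOCKS || n > HEAP_SIZE - heap_top) return NULL;
    master[next_slot] = heap + heap_top;
    block_size[next_slot] = n;
    heap_top += n;
    return &master[next_slot++];
}

void dispose_handle(Handle h) { *h = NULL; }   /* leaves a gap until compaction */
void hlock(Handle h)   { block_locked[h - master] = 1; }
void hunlock(Handle h) { block_locked[h - master] = 0; }

/* Slide unlocked blocks down over gaps and update their master pointers.
   Locked blocks stay where they are. */
void compact_heap(void) {
    size_t cursor = 0;
    for (int i = 0; i < next_slot; i++) {
        if (master[i] == NULL) continue;
        size_t offset = (size_t)(master[i] - heap);
        if (block_locked[i]) {
            cursor = offset + block_size[i];   /* pinned: skip past it */
        } else {
            memmove(heap + cursor, master[i], block_size[i]);
            master[i] = heap + cursor;         /* every handle sees the new address */
            cursor += block_size[i];
        }
    }
    heap_top = cursor;
}
```

Code that had copied *h into a plain char * before compact_heap() ran would be left holding a stale pointer unless it had locked the block first, which is why the lock/unlock discipline was needed.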

Squaring up punch cards to improve the chance of them making it through the feeder without flying all over the place.

line-oriented editors

At my undergraduate school, we had to write a compiler: basically a 2-foot-high printout of code, written from scratch by a team of 3-4. To make things worse, we didn't use yacc, but a tool written by the instructor, who also worked on compilers for IBM.

We had to do this entirely with "ed" as "vi" was deemed too computationally intensive to be used by everyone.

I did get really good at regular expressions though, as the fastest way around a file was by search, and it was even faster to do a text match, bind, and substitution. Of course I was doing this pretty much blind, as I didn't even bother to see what I was working on. It was really a lot faster than using a mouse, cut and paste, etc. and luckily vi has the same capabilities.

It's a lot harder to do it, though, without being able to see the screen :) You COULD print out lines of code, but you weren't really "operating" on it.


Palette-based graphic techniques like palette-cycling animation. I miss the 'old days' of graphics programming until I have to do bitmap rotations and alpha transparency at a remotely usable speed :)
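Palette cycling works because pixels store palette indices, not colors: rotating a range of palette entries makes the image appear to move without touching the frame buffer. A minimal sketch; on VGA the updated entries would then be written to the DAC through ports 0x3C8/0x3C9, which is omitted here, and the Rgb type and cycle_palette name are hypothetical:

```c
#include <stdint.h>

typedef struct { uint8_t r, g, b; } Rgb;

/* Rotate palette entries [first, last] up by one slot, wrapping the last
   entry around to `first`. Pixel data is never touched. */
void cycle_palette(Rgb palette[256], int first, int last) {
    if (first >= last) return;
    Rgb saved = palette[last];
    for (int i = last; i > first; i--)
        palette[i] = palette[i - 1];
    palette[first] = saved;
}
```

Calling this once per frame (for example, on a band of water or fire colors) gives the classic flowing effect for the cost of copying a handful of palette entries.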

Bootstrap loading a computer...

A lot of flight simulators still use the old Encore workstations in a Master/Slave configuration where you have to punch in a series of instructions in Hex on the keypad to load them.

Watching someone do this, or doing it myself (I'm only 25), is like stepping back in time into the computer room in GoldenEye, where you're surrounded by blinking lights, tape backup drives, and Printronix printers.

Let's just say, when you do finally get it to load right (because it doesn't always take on the first permutation), it's hard not to do an "I am invincible!".

Then later we progressed to:

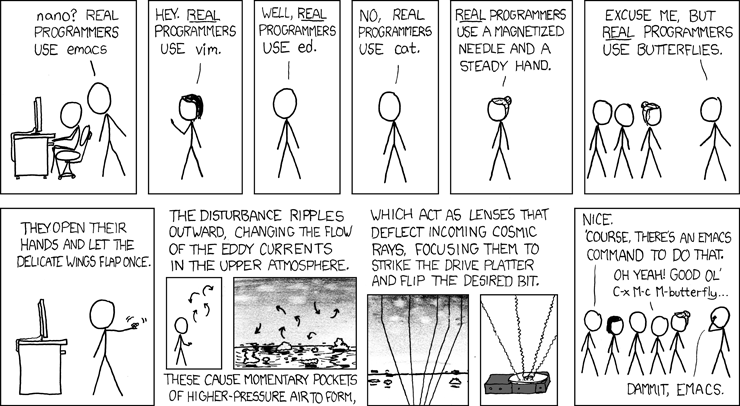

Butterflies.

Paging through the disk device to recover your work after rm * .o

strings is my go-to tool for disk recovery. - pavpanchekha
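The idea behind using strings for recovery is to scan raw disk blocks for runs of printable characters, since lost source text survives as such runs. A rough C equivalent of the core loop (find_strings is a hypothetical helper; the real strings utility has more options):

```c
#include <ctype.h>
#include <stdio.h>

/* Report every run of at least min_len printable characters (or tabs) in
   a raw byte buffer, with its byte offset. Pass out = NULL to only count. */
size_t find_strings(const unsigned char *buf, size_t len, size_t min_len, FILE *out) {
    size_t count = 0, start = 0, run = 0;
    for (size_t i = 0; i <= len; i++) {         /* i == len flushes the last run */
        int printable = i < len && (isprint(buf[i]) || buf[i] == '\t');
        if (printable) {
            if (run == 0) start = i;
            run++;
        } else {
            if (run >= min_len) {
                if (out != NULL)
                    fprintf(out, "%zu: %.*s\n", start, (int)run, (const char *)buf + start);
                count++;
            }
            run = 0;
        }
    }
    return count;
}
```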

For a physics class I once had to enter the machine op codes using a hex keyboard.

Woz did some really genius things in his designs: creative ways to share memory, and the way he got the Apple II to display color using a quasi-phase-shifting technique. He also built new hardware and wrote drivers for it (graphics display, keyboard).

He did some really cool stuff. Very inspirational!

Also cool: when people repurpose Wii remotes and other hardware.

Handling the retrace event from an EGA monitor to make UI animations smooth.

Word alignment and endianness. Two bugbears that I repeatedly watch people come a cropper over when starting out in embedded systems programming (and then have to help them out).
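Two habits that avoid both bugbears in C: decode multi-byte values from byte streams explicitly instead of relying on host byte order, and use memcpy rather than casting a possibly misaligned pointer (dereferencing a misaligned uint32_t * is undefined behavior in C and can trap on strict-alignment CPUs). A minimal sketch with hypothetical helper names:

```c
#include <stdint.h>
#include <string.h>

/* Decode a big-endian 32-bit value; gives the same result on any host. */
uint32_t read_be32(const uint8_t *p) {
    return ((uint32_t)p[0] << 24) | ((uint32_t)p[1] << 16) |
           ((uint32_t)p[2] << 8)  |  (uint32_t)p[3];
}

/* Load a host-order 32-bit value from an address that may not be 4-byte
   aligned. memcpy is safe where *(const uint32_t *)p might fault. */
uint32_t load_u32_unaligned(const uint8_t *p) {
    uint32_t v;
    memcpy(&v, p, sizeof v);
    return v;
}
```

read_be32 suits wire formats and file headers with a defined byte order; load_u32_unaligned only fixes alignment and still returns the value in host byte order.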

We used to debug via beeps of different frequencies from the PC speaker. The Windows service would play a variety of tunes depending on what it was doing (seriously, and not my idea). It even made it into production at a rather large mining company.

Sidenote: The funny thing is, when we eventually took it out, the customer complained and we had to reinstate the functionality!!!

Whistling into the phone to check whether the mainframe was "up" before connecting the phone to the acoustic coupler.

Buying a terminal emulator package only to read in manual that I had to write a Z80 device driver before the program would work (and then writing it).

The important difference between byte pointers and word pointers on Data General machines.

Debugging IBM Assembler code from an ABEND dump.

There are none.

The golden age of computing, and hence of the lost and arcane arts, was the brief period between Babbage getting the Difference Engine online and Ada Lovelace saying to him, "Hey Charley, we could probably use this to win on the horses!".

Since then it has either been downhill, or uphill, or pretty well flat, depending on your point of view and how rose-tinted your spectacles are.

Writing an intro for the C64, where each CPU tick was aligned to 8 pixels drawn on the screen, and every instruction in the CPU took 1-3 CPU ticks. You wrote an interrupt handler for the screen hitting a given horizontal line, and then read the current horizontal position of the cathode ray in the monitor (which could be at one of three positions, depending on the length of the instruction the CPU was executing when the interrupt fired). Then you jumped into a list of NOPs (one instruction each), depending on the cathode ray position, to pixel-align the execution flow of your program to the redraw of the screen. Then you switched background colors on each screen line to implement bouncing bars for your scrolltext.

Great great times.

The ability to take a program of approximately 40K assembly instructions and shrink it by half to fit on a smaller computer (I can't remember the name of the person who did this, but I think it was someone who worked on the Macintosh).

Typing over 50 pages of code from dot matrix paper into a Tandy 1000 to reprogram it completely after it was corrupted... Only to find that it didn't fix anything.

From what I've seen:

Embedding assembly programs inside Basic programs on the TRS-80. It had to be done very carefully because you never knew where it would be executing--relative jumps only. Furthermore there were a few values that would break the structure and likely take out your whole program. Writing code without being allowed to use a zero was a pain.

Getting the teacher to leave alone any line with embedded stuff was even more of a pain. On the Mod Is they displayed gibberish and couldn't be edited by the normal editor. In class we only embedded graphics but she was determined to figure out how it worked and couldn't get it through her head that making no changes in the editor would still trash the line.

Graphics programming with BGI

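```c
#include <graphics.h>  /* Borland BGI (Turbo C / Borland C++ for DOS) */
#include <stdio.h>

int main(void)
{
    int gdriver = DETECT, gmode, errorcode;

    /* Auto-detect the adapter; "" = look for the .BGI driver files in the current directory */
    initgraph(&gdriver, &gmode, "");
    errorcode = graphresult();
    if (errorcode != grOk) {
        printf("Graphics error: %s\n", grapherrormsg(errorcode));
        return 1;
    }

    /* ... drawing code goes here ... */

    closegraph();   /* restore the original video mode */
    return 0;
}
```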

Editing punch cards with sticky tape!

Implementing simple "delete" and "insert" edits on a card copy machine by stopping the appropriate card moving with your finger.

Programming in AMOS, that's what got me hooked on programming.

Debugging DSP software with only a logic analyser to watch the program address & data bus, then manually disassembling the hex into TMS320 assembler to work out where we'd got to. You had to remember how deep the instruction queue was so that you knew how many instructions to ignore when you hit a branch.

Plus the beautiful old TMS320s had a pin called "XF" (External Flag) and you would use the SXF and RXF instructions to flip it to a 1 or a 0, and then stick a logic probe on the pin to see what was happening -- a 1-bit debugging interface!

Erasing and reprogramming UV EPROMs.

Using a DSL framework to generate a generator-DSL, to code a generator which generates DSLs for the domain model.

Using TSRs and switching between operating systems within a running program! I had to acquire data on an old CAMAC system running AMCA-80 on a Z-80. There were no data storage devices for that system. The machine could run CP/M as well, and for that we had a single floppy drive. We managed to switch from AMCA-80 to CP/M in order to store the acquired data on the floppy and then jump back for the next run. This was the time when I knew every single byte by name ;-) and used tokens and short variable names to refer to string fragments in order to conserve RAM. Those were the times...

Compiling from unsaved code in the editor's buffer - Turbo Pascal had this feature. If the code ran, you saved the file. Otherwise your system crashed; you would then have to reboot and start over.

Learning through sleepless nights and trial and error instead of through spoon-feeding tutorials and screencasts.

How to program keypunch drums.

Getting Domino's to deliver to the keypunch line (the line of people waiting for their turn at a keypunch)

What to do with buckets of chad (the holes punched out of cards).

Mode 0Dh EGA graphics [1] programming for DOS. Among the plethora of examples out there covering Mode 13h VGA (which, I freely admit, kicks ass), good luck finding a working example(*) that shows how to plot pixels in EGA.

Now you might be thinking, "yeah, whatever, EGA deserves to die." Fair enough, but perhaps you haven't played Quest for Glory [2], one of the greatest DOS games ever made. Or what about King's Quest IV [3], released in 1988, a cutting edge game that pushed the limits of home user PC hardware at the time. This stuff is a huge part of my heritage as a programmer.

(*) P.S. If you know of such an example, please help [4]. :)
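The memory layout that makes mode 0Dh awkward compared with mode 13h can be sketched as follows: 320x200 in 16 colors stored as four bit planes, 40 bytes per scan line per plane, leftmost pixel in the most significant bit, with bit n of the color living in plane n. The planes are modeled as ordinary arrays here; on real hardware they share the address range at A000:0000 and are selected through the Sequencer Map Mask (ports 3C4h/3C5h, index 2) and the Graphics Controller Bit Mask (ports 3CEh/3CFh, index 8), which this sketch does not program. All names are hypothetical:

```c
#include <stddef.h>
#include <stdint.h>

#define EGA_WIDTH          320
#define EGA_HEIGHT         200
#define EGA_BYTES_PER_LINE (EGA_WIDTH / 8)   /* 40 bytes, 1 bit per pixel */

/* In-memory stand-in for the four EGA bit planes. */
static uint8_t planes[4][EGA_BYTES_PER_LINE * EGA_HEIGHT];

/* Set pixel (x, y) to a 4-bit color: bit n of color goes into plane n. */
void ega_plot(int x, int y, uint8_t color) {
    if (x < 0 || x >= EGA_WIDTH || y < 0 || y >= EGA_HEIGHT) return;
    size_t offset = (size_t)y * EGA_BYTES_PER_LINE + (size_t)x / 8;
    uint8_t mask = (uint8_t)(0x80 >> (x % 8));   /* MSB = leftmost pixel */
    for (int plane = 0; plane < 4; plane++) {
        if (color & (1 << plane)) planes[plane][offset] |= mask;
        else                      planes[plane][offset] &= (uint8_t)~mask;
    }
}

/* Reassemble the 4-bit color of pixel (x, y) from the four planes. */
uint8_t ega_read(int x, int y) {
    if (x < 0 || x >= EGA_WIDTH || y < 0 || y >= EGA_HEIGHT) return 0;
    size_t offset = (size_t)y * EGA_BYTES_PER_LINE + (size_t)x / 8;
    uint8_t mask = (uint8_t)(0x80 >> (x % 8));
    uint8_t color = 0;
    for (int plane = 0; plane < 4; plane++)
        if (planes[plane][offset] & mask) color |= (uint8_t)(1 << plane);
    return color;
}
```

On the real card, a single byte write updates the same offset in every plane enabled by the Map Mask, which is why plotting one pixel typically involves several port writes rather than the single store used in mode 13h.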

[1] http://en.wikipedia.org/wiki/Enhanced_Graphics_Adapter

Mine would have to be writing a TSR to trap the Ctrl+Alt+Del key combination... I still have it lying around somewhere...

The other was writing a Screen Design Aid in Borland C. It was inspired by my computer diploma course in college (1996), which used an AS/400 system with a program called SDA, in which you could draw data-entry screens that interacted with COBOL and RPG IV...

That was what inspired me to write a program that wrote directly to video memory (remember 0xb800:0000 for colour and 0xb000:0000 for monochrome VGA?) for fast reading and writing, and dumped it to disk. I then wrote a small library to read the dumped data back into video memory, including the ASCII line and corner characters...
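The direct-write technique relies on the text-mode layout: an 80x25 screen is 4,000 bytes, and each cell is a character code followed by an attribute byte (foreground in the low nibble, background in the upper bits) at offset (row * 80 + col) * 2. A sketch against an ordinary buffer standing in for video memory, drawing a double-line box with the code page 437 box characters 0xC9, 0xBB, 0xC8, 0xBC, 0xCD and 0xBA; the function names are hypothetical:

```c
#include <stdint.h>

#define TEXT_COLS  80
#define TEXT_ROWS  25
#define VRAM_BYTES (TEXT_COLS * TEXT_ROWS * 2)   /* 4000 bytes: char + attribute per cell */

/* Write one cell: character code, then attribute byte. */
void put_cell(uint8_t *vram, int row, int col, uint8_t ch, uint8_t attr) {
    unsigned offset = (unsigned)(row * TEXT_COLS + col) * 2u;
    vram[offset] = ch;
    vram[offset + 1] = attr;
}

/* Double-line box from (top, left) to (bottom, right) using CP437 characters. */
void draw_box(uint8_t *vram, int top, int left, int bottom, int right, uint8_t attr) {
    for (int c = left + 1; c < right; c++) {
        put_cell(vram, top, c, 0xCD, attr);      /* horizontal double line */
        put_cell(vram, bottom, c, 0xCD, attr);
    }
    for (int r = top + 1; r < bottom; r++) {
        put_cell(vram, r, left, 0xBA, attr);     /* vertical double line */
        put_cell(vram, r, right, 0xBA, attr);
    }
    put_cell(vram, top, left, 0xC9, attr);       /* top-left corner */
    put_cell(vram, top, right, 0xBB, attr);      /* top-right corner */
    put_cell(vram, bottom, left, 0xC8, attr);    /* bottom-left corner */
    put_cell(vram, bottom, right, 0xBC, attr);   /* bottom-right corner */
}
```

Because the whole screen is one flat 4,000-byte block, saving and restoring it is just a single fwrite and fread of VRAM_BYTES, which is what made the dump-to-disk approach so fast.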

Remember those flashy boxes generated by entering ALT+240-250 somewhere... I still have it on my netbook as I type this... cool! :D And creating a file on the DOS command line that was impossible to delete because its name contained a hidden ALT+255 character, which displayed as a blank, so deleting it by the visible name would fail. :)

edlin FILE[ALT+255].TXT
....
DEL FILE.TXT
Invalid command or filename.

Edlin was some editor; in fact, I did grow to like it...

Happy days, Best regards, Tom.

In my experience, strong kung-fu at the command line is a lost art among many IDE-oriented developers. I often help people with problems that are simple if one knows (a) Bash (b) the Unix find command and especially (c) regular expressions.

Concern for PERFORMANCE, SECURITY, FOOTPRINT, and CLARITY as important features.

These are just side effects of a clean design.

Without this "lost art", this guy would not be able to piss off so many billionaires:

A brilliant demonstration that "lost arts" are still relevant.

When I was a computer operator I altered a program while it was running to change the 'DISTY' instruction that was spewing out messages on the teletype to a 'NOOP', finished early and went to the pub. The other operator bought the beer.

Programming without online API help, which meant reading printed API documentation.

Using an oscilloscope to reverse engineer communication protocols no one ever documented.

Manual quicksort - Dropping a stack of punch cards (say, 200 of them) and manually quicksorting them as a result.